The last post, on some notes to myself, made me curious about how easy or difficult it would be to generate a very simple solution that makes DuckDB accessible from remote locations. There are probably far better projects out there for remotely accessing your DuckDB files, but I mainly wanted an mTLS-first solution, with no option to expose it without client certificates. In addition, I also wanted to keep it simple. You can find the code over here, and I am trying to keep the commit history informative in terms of what AI has been improving.

My main curiosity-driven questions were:

- Does it reduce time?

- How ‘secure’ is the first version of the generated code?

- Can AI aid in iteratively improving the code?

Fair warning: I’m neither an AI nor an LLM expert, I’m just experimenting as I go. For those wondering, I’m using VS Code with the GitHub Copilot plugin set to auto selection for models. I keep a Gemini chat open in parallel to ask some questions and contrast the answers/solutions.

But, but, but… your setup is missing all the context and/or agent files! Yes, I like re-inventing the wheel and making mistakes myself to better understand why others advise going in a certain direction.

Does it reduce time?

At first sight it seems like it does, because boy does it type fast! However, because of the missing context / agent files (I only used simple prompting), you do have to tidy up and fix a lot after the first round of generated code. I guess it depends on how you measure time, but one way or another you need to invest time to get the solution you want, the way you want it.

How ‘secure’ is the first version of the generated code?

I have to admit I didn’t include a ‘make sure it is secure’ part in my simplistic prompting, so I guess I should not be surprised that the code isn’t secure by default. It reminds me of when I started programming, when I made (and still make) a lot of security mistakes. For example, it generated certificates with sequential serial numbers, created files and directories with insecure permissions, didn’t lock down the DuckDB environment, etc. In a way it mimics programmers who are not fully familiar with the security intricacies of not only the language they are developing in, but also the rest of the stack used for the solution being built.
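To make two of those mistakes concrete, here is a minimal sketch of what the fixed versions could look like. It is written in Python purely for illustration and is not the code from the project; the helper names `random_serial_number` and `write_private_file` are hypothetical, and the permission handling assumes a POSIX system. Locking down DuckDB itself is not shown.

```python
import os
import secrets


def random_serial_number() -> int:
    """Return a positive, unpredictable certificate serial number.

    Hypothetical helper: 159 random bits keep the value positive and
    within the 20-octet limit that RFC 5280 places on serial numbers.
    """
    serial = 0
    while serial == 0:  # zero is not a valid serial, so draw again
        serial = secrets.randbits(159)
    return serial


def write_private_file(path: str, data: bytes) -> None:
    """Create `path` readable and writable by the owner only (POSIX).

    Hypothetical helper: the mode is applied at creation time, so the
    file is never briefly world-readable, and O_EXCL refuses to
    overwrite an existing file.
    """
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
    with os.fdopen(fd, "wb") as handle:
        handle.write(data)
```

The point of both helpers is the same: the secure choice is made at the moment the thing is created, rather than patched on afterwards with a counter reset or a later `chmod`.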

Can AI aid in iteratively improving the code?

This it seems to be able to do, but don’t try too big of a leap in a single shot. For example, after the first version of the single-file solution worked, I wondered if I could single-shot it into a more maintainable version. I decided to have it plan and execute on creating tests and making the code modular by splitting it up. It got stuck in endless loops on several tests because it overlooked requirements that had to be implemented first.

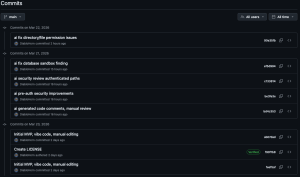

In smaller chunks it does a pretty good job of improving code and making suggestions. So I decided to improve the code bit by bit and to try to mirror this in the commit history.

The idea behind this is to be able to easily go back in time and see the type of improvements that AI suggests and makes when specifically prompted to review and improve code.

I have yet to split up the code, but then again, maybe this project is good enough as it is for the learning purpose I set out to achieve.